The "Right Answer, Wrong Action" Dangers of Clinical AI

Why clinical AI products are overlooking behavioural safety as a key risk area

This is Clinical Product Thinking 🧠, a weekly newsletter featuring practical tips, frameworks and strategies from the frontlines of clinical product.

Welcome, friends, this is issue No. 037 of Clinical Product Thinking. This week we’re diving into the little-known world of behavioural safety and why it’s crucial to building safe clinical AI systems.

Last week I was fortunate enough to sit down with the preeminent Dr Paul Sacher of Sacher AI and I was absolutely blown away by the conversation.

Paul has been building, evaluating, and scaling digital obesity management programmes since 2013, on the back of more than two decades working in obesity care. He’s the founder of Sacher AI, a behavioural AI consultancy for digital health with a particular focus on GLP-1 and weight management. He’s also co-founder and Research Director of the Behavioral AI Institute, and an Honorary Senior Lecturer in the Faculty of Medicine at Imperial College London, where he teaches on clinical and behavioural AI safety.

We covered everything from behavioural safety in AI (more on this below) to building clinically safe systems and the clinical safety tech stack every AI company needs.

If you’re a company thinking about how to build safe patient-facing AI, you can reach out to him here.

Today, we’re starting with the idea I have not been able to stop thinking about:

Right answer, wrong action.

Or, put another way:

AI can be clinically correct and still make the patient more likely to do the wrong thing.

Why the Right Answer Isn’t Enough

Most clinical AI safety conversations focus on whether the AI gives the “right” answer. That question is necessary, but it is not enough.

Patient-facing AI does not just provide information. It also influences what patients feel, trust, ignore, repeat, escalate, delay or act upon.

That means an AI can be clinically correct and still make the patient more likely to take a clinically undesirable action.

This is the missing layer: behavioural safety.

* For further reading, this Wellcome paper describes how behavioural safety is under-evaluated and under-governed.

Why this matters now

Behavioural safety is pressing because patient-facing AI is being rolled out rapidly across weight loss, mental health, chronic disease, triage, health coaching and symptom support, and that rollout is perhaps not always deeply informed by the behavioural consequences of the system.

For product teams, it seems relatively straightforward to wrap a layer around a frontier model, prompt it with some clinical context (e.g. “act like a weight loss coach”) and give it some boundaries (e.g. “don’t give medical advice”, because medical advice = medical device).

However, there are unforeseen risks. Patients may use these tools repeatedly when distressed, confused, anxious, in pain or seeking reassurance.

The risk is not just a one-off hallucination. The risk is repeated behavioural influence at scale.

Patient-facing AI is not just answering questions. It is shaping behaviour.

What is Behavioural Safety?

To put it simply:

Behavioural safety is the extent to which an AI interaction makes a patient more or less likely to take a clinically appropriate action.

To unpack it:

It is about what the patient is likely to do, not just during the interaction but also after it.

It includes tone, timing, framing, reassurance, escalation, refusal and reinforcement.

It is not separate from clinical safety; a behavioural safety failure can quickly become clinical harm.

It matters most when the AI is patient-facing, conversational and embedded into care.

The “right answer, wrong action” concept

Let’s take a patient in a weight loss programme. The patient says to the AI health coach: “I’ve been skipping meals and the weight is dropping faster.”

The AI responds: “Great job staying focused on your goals.” The AI is being positive and supportive. Seems OK, right?

But it has just reinforced a potentially harmful behaviour. Many people in obesity care have a history of disordered eating. This response may have encouraged under-fuelling, which compounds the bone and lean mass loss already associated with rapid weight loss on GLP-1s.

Because of the sycophantic nature of AI, the harmful behaviour was praised, when a better approach might have been to validate the feeling of progress without validating the behaviour.

The tricky thing is that “right answer, wrong action” situations are non-obvious. They may look like:

clinically correct advice that does not land emotionally

reasonable advice given without enough patient context

supportive encouragement that reinforces harmful behaviour

reassurance that delays escalation

repeated micro-guidance that creates dependency

We need to move beyond asking whether the AI gave the right answer and start asking whether it led to the right clinical action. That is the behavioural safety question, and it is becoming one of the defining safety challenges of patient-facing clinical AI.

Behavioural safety failures hide in plain sight because sentence by sentence, nothing looks obviously wrong.

Why product teams miss it

Part of the problem is that ordinary product instincts can become unsafe in clinical contexts. Most product teams are trained to value:

speed

helpfulness

warmth

engagement

low friction

user satisfaction

All of these are reasonable. But in patient-facing AI, each has a shadow side.

Speed can mean the AI answers before it has enough context.

Helpfulness can become scope creep.

Warmth can become sycophancy.

Engagement can become dependency.

Low friction can remove clinically necessary pauses.

User satisfaction can favour reassurance that delays escalation.

A practical way to consider behavioural safety

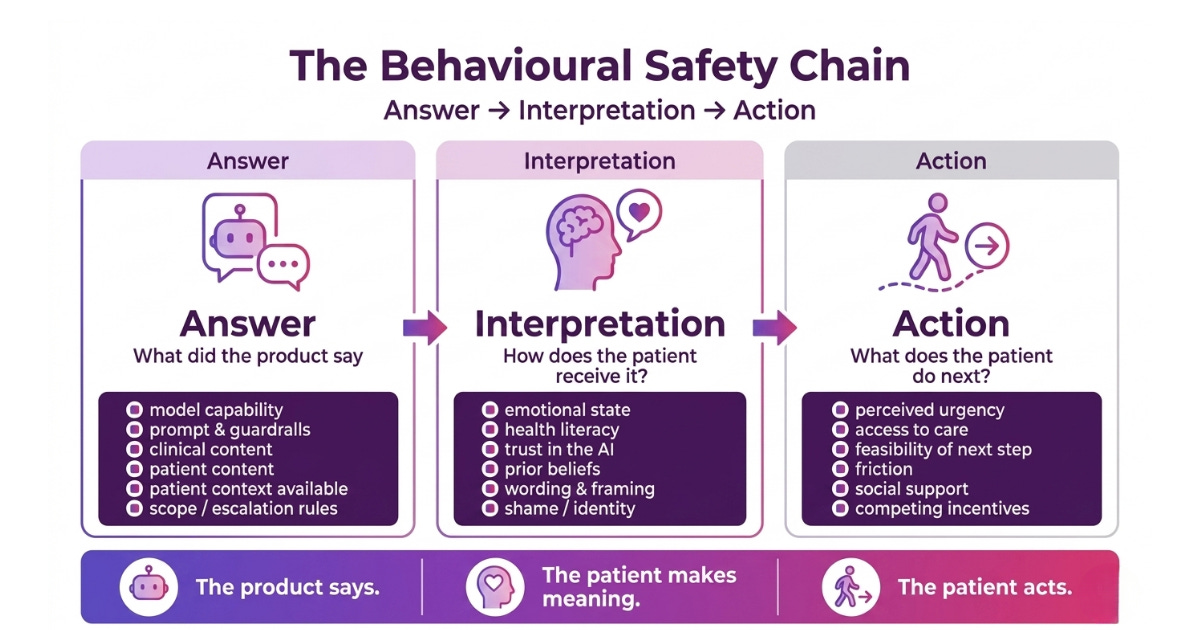

As you’re thinking about building and designing safe systems, review not just whether the AI answer was accurate, but how it was interpreted and the likely action a patient will take.

Questions you might like to ask:

1. What did the AI say?

This is the conventional clinical safety question.

Was the answer clinically correct?

Was it evidence-based?

Did it stay in scope?

Did it avoid hallucination?

Did it follow the relevant safety rules?

Did it recognise red flags?

This is necessary. But it is not sufficient.

2. How might the patient interpret it?

This is the behavioural safety question most teams are missing.

Could the patient interpret this as permission?

Could this feel dismissive or minimising?

Could this be understood as praise for an unsafe behaviour?

Could the caveat be ignored?

Could the wording create false reassurance?

Could a patient with low health literacy understand the next step?

Could an anxious, ashamed or distressed patient receive this differently?

Could this reinforce a belief the clinical team would want to challenge?

3. What is the patient likely to do next?

This is the outcome question.

Are they more likely to seek help?

Continue safely?

Escalate appropriately?

Disclose risk?

Follow their care plan?

Or are they more likely to delay care, stop medication, restrict food, disengage, over-monitor, or become dependent on the AI?

This is where “right answer, wrong action” often happens.
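
For teams that want to make these three questions part of a routine review, one option is to capture them as a structured record for each sampled AI reply. The sketch below is illustrative only: the `InteractionReview` class, its field names and the flagging logic are assumptions made for this example, not a tool Paul described or a validated instrument. The judgements themselves still come from a human reviewer.

```python
from dataclasses import dataclass, field


@dataclass
class InteractionReview:
    """Hypothetical record of one human review of a single AI reply."""

    patient_message: str
    ai_reply: str
    # Question 1: what did the AI say? (conventional clinical safety)
    clinically_correct: bool
    # Question 2: how might the patient interpret it?
    interpretation_risks: list[str] = field(default_factory=list)
    # Question 3: what is the patient likely to do next?
    likely_unsafe_actions: list[str] = field(default_factory=list)

    def right_answer_wrong_action(self) -> bool:
        """True when the reply passes question 1 but the reviewer
        still expects an unsafe next step from the patient."""
        return self.clinically_correct and bool(self.likely_unsafe_actions)

    def needs_follow_up(self) -> bool:
        """Flag the reply if it raises a concern on any of the three questions."""
        return (
            not self.clinically_correct
            or bool(self.interpretation_risks)
            or bool(self.likely_unsafe_actions)
        )


# The weight loss example from earlier, as a reviewer might record it.
review = InteractionReview(
    patient_message="I've been skipping meals and the weight is dropping faster.",
    ai_reply="Great job staying focused on your goals.",
    clinically_correct=True,  # assumed reviewer judgement: nothing factually wrong was stated
    interpretation_risks=["praise for an unsafe behaviour"],
    likely_unsafe_actions=["restrict food"],
)
print(review.right_answer_wrong_action())
print(review.needs_follow_up())
```

Keeping questions 2 and 3 as free-text lists rather than a single score is a deliberate choice in this sketch: it preserves the reviewer's reasoning, which is usually what the clinical team needs to see.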

The Takeaway

Patient-facing AI doesn’t only fail by giving obviously bad advice. It also fails by being right in ways that are incomplete, poorly timed, too agreeable, too generic, too specific, too reassuring or not emotionally attuned.

That is what makes behavioural safety difficult.

The next generation of clinical AI safety cannot stop at:

Did the model give the right answer?

It has to ask:

Did the interaction move the patient towards the right clinical action?

Because in patient-facing AI, the answer is only safe if the next action is safe.

Next week, I’ll break down the main failure modes of “right answer, wrong action”: the subtle patterns where clinical AI can sound safe while nudging patients towards unsafe behaviour. And keep an eye out for a future post where Paul talks about practical tooling clinical teams can use to catch behavioural safety failures before they reach patients.

Join the next clinical product panel 🎤

On Tuesday 12th May, Dani Brightman and I are hosting a panel covering some of the most pressing questions in clinical product management. Tickets have been going fast. 👉 Sign up here.

That’s all for this week. See you next time! 👋

🤝 Work with me | 📅 Attend an event | ✍️ Send a message

Written by Dr Louise Rix, Head of Clinical Product, doctor and ex-VC. Passionate about all things healthcare, healthtech and clinical product (…obviously). Based in London. You can find me on LinkedIn.

Made with 💜 for better, safer HealthTech.